“La pensée n’est qu’un éclair au milieu d’une longue nuit. Mais c’est cet éclair qui est tout.”

Thought is only a flash in the middle of a long night. But this flash means everything.

– Henri Poincaré (1854-1912), La valeur de la science.

Karim Barigou

Since September 2024, I have been Professor of Actuarial Sciences at the Louvain School of Management (LSM) and the Louvain Institute for Multidisciplinary Analysis and Quantitative Modeling (LIDAM) at Université catholique de Louvain (UCLouvain).

My research interests are in life insurance and its interplay with quantitative finance. My recent work covers diverse areas such as mortality modelling with epidemic and climate shocks, longevity risk management, pricing and hedging variable annuities, and the application of statistical learning techniques in life insurance.

Previously, I was Professor in the School of Actuarial Science at Université Laval (Quebec, Canada). I also worked as a postdoctoral researcher at ISFA, University Lyon 1 (2019-2022), focusing on online detection of longevity risk and on model selection with Stéphane Loisel and Yahia Salhi. I earned my Ph.D. in Business Economics from KU Leuven in 2019, where my research under the supervision of Jan Dhaene focused on combining market-consistent and actuarial valuation methods for life insurance liabilities.

Open-Source Software

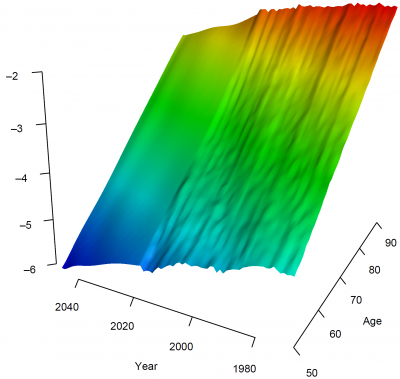

StanMoMo: An R package for Bayesian Mortality Modelling with Stan

Glad to share a new R package for Bayesian Mortality Modelling. The package provides functions to fit and forecast standard mortality models (Lee-Carter, APC, CBD, etc.) in a fully Bayesian setting. The package also includes functions for model selection and model averaging based on leave-future-out validation.
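For readers unfamiliar with the Lee-Carter model mentioned above, here is a minimal sketch in Python of the classical SVD fit, log m(x,t) ≈ a(x) + b(x) k(t), followed by a random-walk-with-drift point forecast. This is not StanMoMo code: the package is written in R and works in a Bayesian setting, whereas this sketch is a plain least-squares illustration. The function names `fit_lee_carter` and `forecast_k` and the synthetic data are assumptions made purely for illustration.

```python
import numpy as np

def fit_lee_carter(log_m):
    """Classical (non-Bayesian) Lee-Carter fit by SVD.

    log_m: array of shape (n_ages, n_years) of log central death rates.
    Returns (a, b, k) with log_m[x, t] ~ a[x] + b[x] * k[t],
    under the usual constraints sum(b) = 1 and sum(k) = 0.
    """
    a = log_m.mean(axis=1)                  # average log rate per age
    centered = log_m - a[:, None]           # each row now has mean zero
    u, s, vt = np.linalg.svd(centered, full_matrices=False)
    b = u[:, 0]                             # first left singular vector
    k = s[0] * vt[0]                        # first period component
    scale = b.sum()                         # rescale so that sum(b) = 1
    return a, b / scale, k * scale

def forecast_k(k, horizon):
    """Point forecast of the period index by a random walk with drift."""
    drift = (k[-1] - k[0]) / (len(k) - 1)
    return k[-1] + drift * np.arange(1, horizon + 1)

# Synthetic log death rates (hypothetical data, for illustration only)
rng = np.random.default_rng(0)
n_ages, n_years = 10, 30
true_a = np.linspace(-6.0, -2.0, n_ages)
true_b = np.full(n_ages, 1.0 / n_ages)
true_k = np.linspace(15.0, -15.0, n_years)
noise = rng.normal(0.0, 0.01, (n_ages, n_years))
log_m = true_a[:, None] + np.outer(true_b, true_k) + noise

a, b, k = fit_lee_carter(log_m)
k_future = forecast_k(k, horizon=5)
log_m_future = a[:, None] + np.outer(b, k_future)   # projected log rates
```

A full Bayesian treatment replaces these point estimates with posterior distributions for a, b and k, so that forecasts come with parameter uncertainty.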

Most Recent Papers

Mortality Modeling and Forecasting with the Actuaries Climate Index

This paper proposes a novel framework for integrating climate-related variables into mortality modeling. Using the Actuaries Climate Index (ACI), we adopt a two-step approach: first, seasonal mortality patterns are modeled with an extended Lee-Carter structure incorporating SARIMA processes and cyclic splines; second, residual variations are explained by ACI components through statistical and machine learning methods (GLMs, GAMs, and XGBoost). Additionally, a SARIMA-Copula model captures dependence between mortality trends and temperature extremes. Results demonstrate that including climate indicators significantly improves forecasting accuracy, providing actuaries and policymakers with a robust tool to manage climate-related mortality risks.

A Penalized Distributed Lag Non-Linear Lee-Carter Framework for Regional Weekly Mortality Forecasting

This paper introduces a novel extension of the Lee–Carter model that integrates age- and region-specific seasonal effects with penalized distributed lag non-linear components, capturing delayed and non-linear impacts of heat, cold, and influenza. The framework models mortality using a negative binomial distribution to handle overdispersion, and incorporates SARIMAX processes for latent dynamics and copulas for cross-regional dependencies. Applied to French regional data from 1990 to 2019, the model delivers well-calibrated forecast distributions and outperforms standard benchmarks. It reveals strong heterogeneity in temperature and influenza effects across age groups and regions, offering valuable insights for public health planning and actuarial forecasting.

Granular mortality modeling with temperature and epidemic shocks: a three-state regime-switching approach

How do temperature extremes and epidemics impact mortality beyond seasonal trends? This study introduces a novel regime-switching framework to model deviations in mortality driven by environmental and respiratory shocks. Our model identifies three distinct states: a baseline state capturing regular seasonal patterns, an environmental shock state reflecting excess mortality during heatwaves, and a respiratory shock state addressing spikes caused by influenza and COVID-19 outbreaks. Using weekly mortality data from 21 French regions across six age groups, we model state transitions with dynamic predictors, including lagged temperature and influenza incidence rates. Through scenario-based projections and bootstrap uncertainty quantification, this framework provides valuable insights for healthcare planning and risk management, helping hospitals and policymakers prepare for future mortality shocks.

Information-Neutral Hedging of Derivatives Under Market Impact and Manipulation Risk

This paper examines derivative pricing and hedging in illiquid markets where large traders’ order flow creates persistent price effects. We address a key limitation of existing models by separating informational order-flow effects from mechanical price impact and transaction costs using a counterfactual informed observer. Within this framework, we identify information-neutral probability measures and develop a hedging approach that accounts for both transaction costs and permanent market impact. Numerical results show that non-linear execution costs can lead to significant deviations from the Black–Scholes hedge for an out-of-the-money call option.

Expected Utility Optimization with Convolutional Stochastically Ordered Returns

This paper expands the theoretical framework by considering investment returns modeled by a stochastically ordered family of random variables under the convolution order, including Poisson, Gamma, and exponential distributions. Utilizing fractional calculus, we derive explicit, closed-form expressions for the derivatives of expected utility for various utility functions, significantly broadening the potential for analytical and computational applications. We apply these theoretical advancements to a case study involving the optimal production strategies of competitive firms, demonstrating the practical implications of our findings in economic decision making.

Bayesian mortality modelling with pandemics: a vanishing jump approach

We propose novel single- and multi-population mortality models with vanishing jumps, where the impact of shocks is strongest initially and gradually fades over time. Existing models typically assume either transitory one-period jumps or permanent shifts. Using data from COVID-19 and the World Wars, our Bayesian approach outperforms transitory shock models in both in-sample and out-of-sample tests, offering a more realistic representation of mortality dynamics during pandemics.

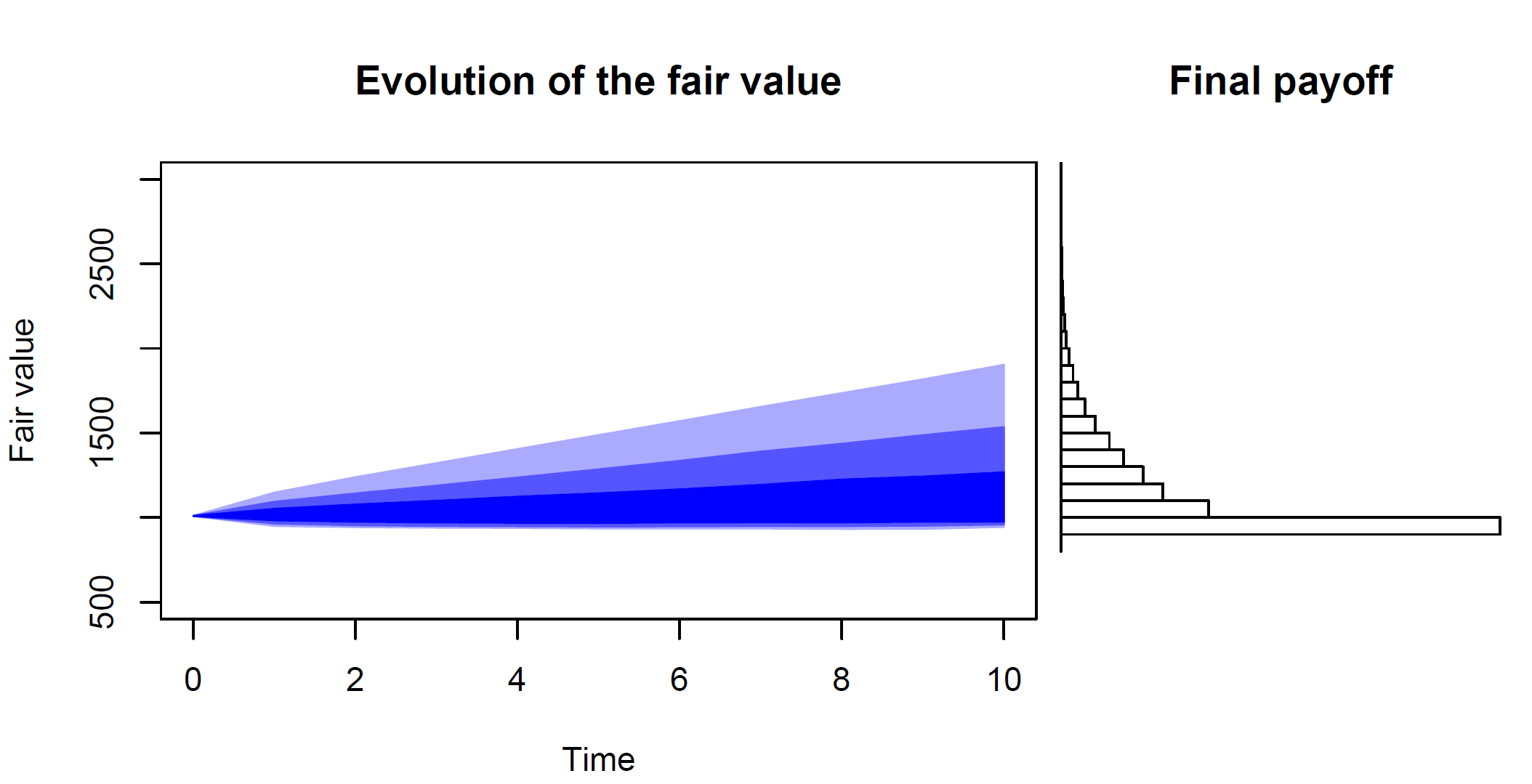

Actuarial-consistency and two-step actuarial valuations: a new paradigm to insurance valuation

Motivated by solvency regulations, the recent focus has been on financial risks and so-called market-consistent valuations. In this setting, the financial market is the main driver and actuarial risks only appear as the ‘second step’. This paper goes against the tide and introduces the concept of actuarial-consistent valuations, where actuarial risks are at the core of the valuation. We propose a two-step actuarial valuation that is first driven by actuarial information and is actuarial-consistent. We also provide a detailed comparison between actuarial-consistent and market-consistent valuations.

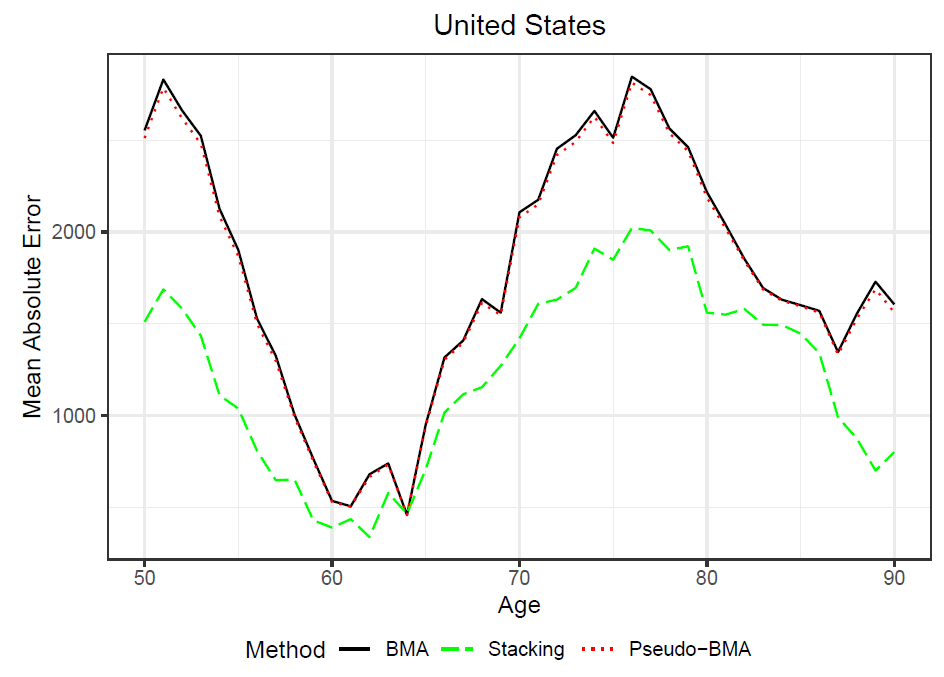

Bayesian model averaging for mortality forecasting using leave-future-out validation

Relying on one specific model can be too restrictive and lead to well-documented drawbacks, including model misspecification, parameter uncertainty and overfitting. In this paper, we consider a Bayesian Negative-Binomial model to account for parameter uncertainty and overdispersion. Moreover, model averaging based on out-of-sample performance is considered as a response to model misspecification and overfitting. Overall, we find that our approach outperforms standard Bayesian model averaging in terms of prediction performance and robustness.

Parsimonious Predictive Mortality Modeling by Regularization and Cross-Validation with and without Covid-Type Effect

Most mortality models are very sensitive to the sample size or to perturbations in the data. In this paper, we show how regularization and cross-validation can be used to smooth and forecast the mortality surface. In particular, our approach outperforms the P-spline model in terms of prediction and is much more robust when a Covid-type effect is included.

Insurance valuation: A two-step generalized regression approach

There is still an open debate on how to appropriately define a "fair" value and a risk margin for long-term insurance liabilities. In this paper, we discuss how solvency constraints can be efficiently included in the hedging process and the risk margin.

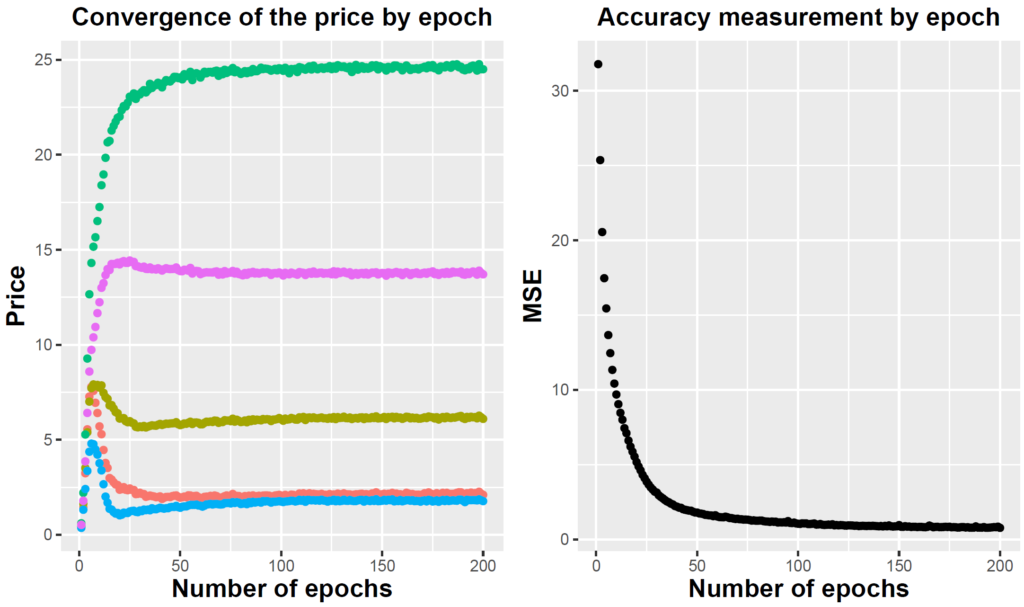

Pricing equity-linked insurance by neural networks

In this paper, we price a portfolio of equity-linked contracts in a general incomplete actuarial-financial market. We show that the pricing problem can be handled efficiently using neural networks.